Sight

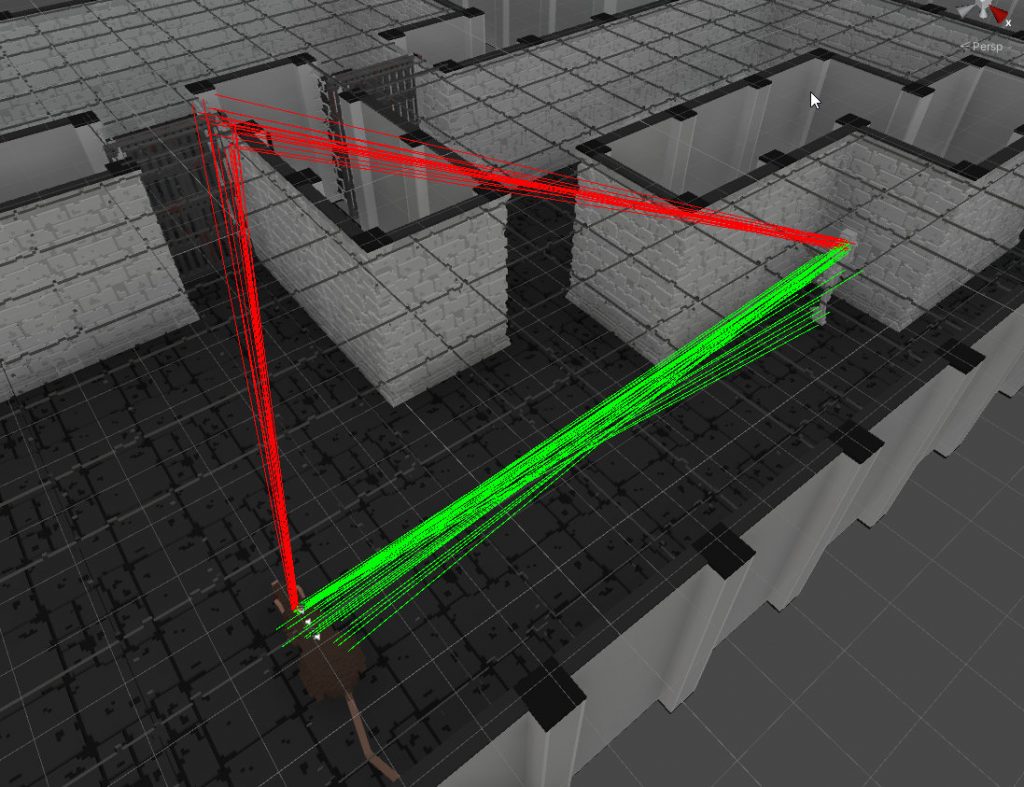

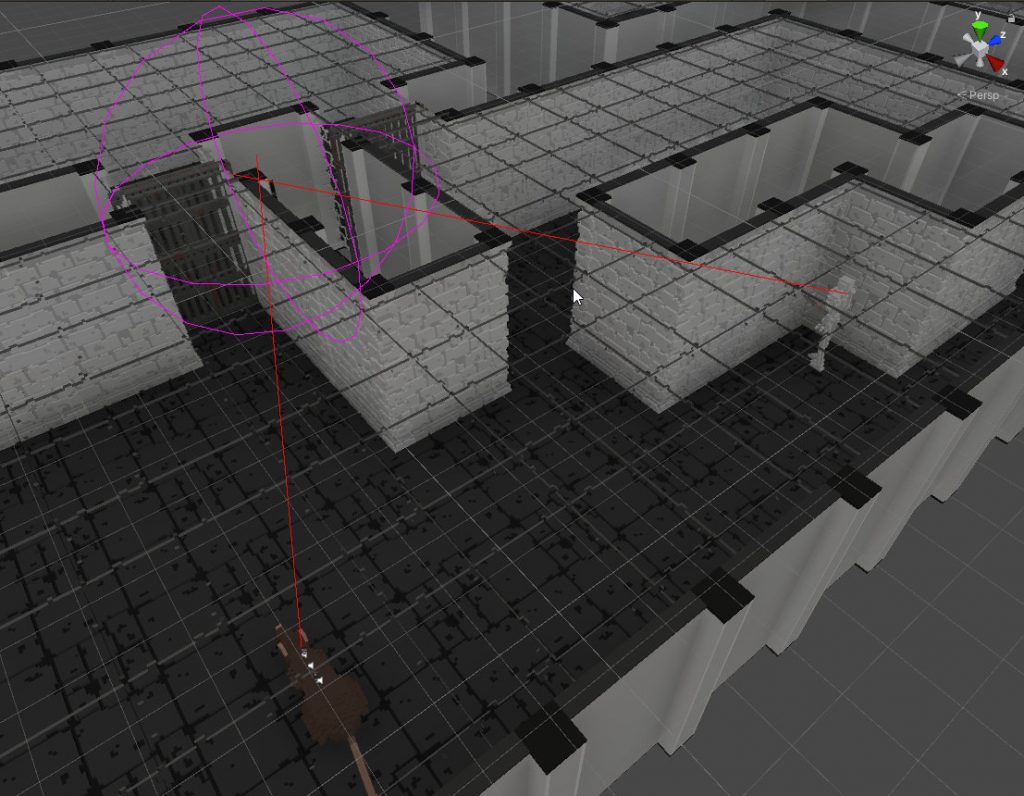

The sight (visibility) check is based on rays colliding with the physical representation of objects: their colliders. The start of each ray is generated randomly inside a box placed at the observer's head position, and the end inside a box representing the target's body. Casting rays from random positions gives a reasonable probability of seeing a target that is only partially covered by an obstacle, because the more of the target is exposed, the more of the random rays get through.
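Here is a minimal sketch of that randomized ray check, assuming obstacles are modeled as axis-aligned boxes tested with the standard slab method. In a real engine you would call the physics raycast against the actual colliders instead; `Box`, `random_point_in_box`, `segment_hits_box` and `sight_ray_check` are hypothetical names used only for illustration.

```python
import random
from dataclasses import dataclass

Vec3 = tuple[float, float, float]

@dataclass
class Box:
    """Axis-aligned box (hypothetical stand-in for a collider), given by min and max corners."""
    lo: Vec3
    hi: Vec3

def random_point_in_box(box: Box, rng: random.Random) -> Vec3:
    # Sample each coordinate uniformly between the box's min and max corner.
    x, y, z = (rng.uniform(l, h) for l, h in zip(box.lo, box.hi))
    return (x, y, z)

def segment_hits_box(start: Vec3, end: Vec3, box: Box) -> bool:
    """Slab test: does the segment start->end pass through the box?"""
    t_enter, t_exit = 0.0, 1.0
    for s, e, lo, hi in zip(start, end, box.lo, box.hi):
        d = e - s
        if abs(d) < 1e-12:
            # Segment is parallel to this slab; it misses unless it lies within it.
            if s < lo or s > hi:
                return False
            continue
        t1, t2 = (lo - s) / d, (hi - s) / d
        if t1 > t2:
            t1, t2 = t2, t1
        t_enter, t_exit = max(t_enter, t1), min(t_exit, t2)
        if t_enter > t_exit:
            return False
    return True

def sight_ray_check(head: Box, body: Box, obstacles: list[Box], rng: random.Random) -> bool:
    """One visibility check: a single ray between random points in the head and body boxes."""
    start = random_point_in_box(head, rng)
    end = random_point_in_box(body, rng)
    return not any(segment_hits_box(start, end, obstacle) for obstacle in obstacles)

# Usage: a low wall hides roughly the lower half of the target's body.
rng = random.Random(42)
head = Box((-0.1, 1.6, -0.1), (0.1, 1.8, 0.1))
body = Box((9.7, 0.0, -0.3), (10.3, 1.8, 0.3))
low_wall = Box((4.9, -10.0, -10.0), (5.1, 1.3, 10.0))
visible = sum(sight_ray_check(head, body, [low_wall], rng) for _ in range(2000))
print(f"{visible / 2000:.2f} of the rays reached the target")
```

A single check is cheap and gives a yes/no answer, while repeating it over several frames naturally yields a higher chance of spotting a target that is more exposed.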

The results of the ray casts are stored in each actor's memory. Along with the boolean result, the memory also stores the time of the ray cast, the target's position and, possibly later, the target's state (for example what they were holding or what they were doing at the time of the successful visibility check). My AI actors check both friends and enemies.
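One possible shape for such a memory is sketched below. The field names, the optional `target_state` string and the per-target history cap (`max_entries`) are my assumptions, not taken from an actual implementation; `target_id` can refer to friends and enemies alike.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Optional

Vec3 = tuple[float, float, float]

@dataclass
class SightRecord:
    target_id: str                      # friend or enemy, both are tracked
    visible: bool                       # boolean result of the ray cast
    time: float                         # game time of the ray cast
    target_position: Vec3               # where the target was at that moment
    target_state: Optional[str] = None  # hypothetical: e.g. "holding a rifle"

@dataclass
class ActorMemory:
    max_entries: int = 16  # hypothetical cap so old records are forgotten
    records: dict[str, deque] = field(default_factory=dict)

    def store(self, record: SightRecord) -> None:
        # Keep a bounded history per target; the oldest record drops off first.
        if record.target_id not in self.records:
            self.records[record.target_id] = deque(maxlen=self.max_entries)
        self.records[record.target_id].append(record)

    def last_seen(self, target_id: str) -> Optional[SightRecord]:
        # Walk from newest to oldest and return the latest successful check.
        for record in reversed(self.records.get(target_id, ())):
            if record.visible:
                return record
        return None
```

Keeping failed checks as well as successful ones lets the actor tell "I saw him two seconds ago" apart from "I have been looking and he is gone".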

Hearing

I’ve implemented a simple ‘noise engine’. It’s basically a manager that holds all noises, represented as spheres in the game world, and keeps them ‘alive’ for a short period of time. An AI actor can check at any time whether any noise sphere is colliding with its head. The result is stored in memory together with the time and other important data about the detected noise.
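A minimal sketch of such a manager follows, assuming the listener's head is approximated by a sphere so that "colliding" becomes a sphere-sphere overlap test. `Noise`, `NoiseManager`, `emit`, `update`, `noises_at_head` and the `lifetime` field are hypothetical names chosen for illustration.

```python
import math
from dataclasses import dataclass

Vec3 = tuple[float, float, float]

@dataclass
class Noise:
    center: Vec3       # where the noise was made
    radius: float      # sphere radius in world units
    intensity: float   # loudness at the source
    created_at: float  # game time when the noise was emitted
    lifetime: float    # how long the sphere stays "alive", in seconds

    def alive(self, now: float) -> bool:
        return now - self.created_at < self.lifetime

class NoiseManager:
    """Hypothetical manager holding every active noise sphere in the world."""

    def __init__(self) -> None:
        self._noises: list[Noise] = []

    def emit(self, noise: Noise) -> None:
        self._noises.append(noise)

    def update(self, now: float) -> None:
        # Drop noises whose short lifetime has elapsed.
        self._noises = [n for n in self._noises if n.alive(now)]

    def noises_at_head(self, head_center: Vec3, head_radius: float, now: float) -> list[Noise]:
        # Sphere-sphere overlap: distance between centres <= sum of the radii.
        return [
            n for n in self._noises
            if n.alive(now) and math.dist(n.center, head_center) <= n.radius + head_radius
        ]
```

Filtering by `alive` inside the query as well as in `update` means a listener never hears a stale noise, even if `update` has not run yet this frame.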

Each AI actor has a tunable constant that represents its level of deafness. Since the intensity of a noise decreases with the distance from its source, it is easy to compare the intensity at the listener's position against the deafness level to determine whether the noise was heard.
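The text does not specify how intensity falls off with distance, so the sketch below assumes a linear falloff from the source intensity down to zero at the edge of the noise sphere; an inverse-square law would work the same way. `intensity_at`, `is_heard` and the `deafness` parameter are hypothetical names.

```python
import math

Vec3 = tuple[float, float, float]

def intensity_at(source_intensity: float, source_pos: Vec3, radius: float, listener_pos: Vec3) -> float:
    # Assumed linear falloff: full intensity at the source, zero at the sphere's edge.
    d = math.dist(source_pos, listener_pos)
    if d >= radius:
        return 0.0
    return source_intensity * (1.0 - d / radius)

def is_heard(source_intensity: float, source_pos: Vec3, radius: float,
             listener_pos: Vec3, deafness: float) -> bool:
    # Strictly greater, so an actor with deafness 0 hears everything inside
    # the sphere but nothing outside it.
    return intensity_at(source_intensity, source_pos, radius, listener_pos) > deafness

# Usage: a noise of intensity 10 heard from halfway to the sphere's edge.
print(intensity_at(10.0, (0.0, 0.0, 0.0), 20.0, (10.0, 0.0, 0.0)))
print(is_heard(10.0, (0.0, 0.0, 0.0), 20.0, (10.0, 0.0, 0.0), deafness=4.0))
```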

That brings me to the end. The next part will be about behavior trees and decision making.